Of the 70,000+ Alexa Skills and many thousands of Google Assistant Actions in the wild today, many return basic single-turn responses and are fairly limited in what they can do. This isn’t necessarily a bad thing: since their inception, voice assistant platforms have been passively training users to expect brief, limited responses. Currently, the best skills use short responses for low-friction experiences, performing tasks in voice that are easier to complete than in other channels like web or mobile. In my work world, this includes things like quick updates on pricing or availability, simple status updates, and command-and-control scenarios (think smart home/IoT).

But technology is an ever-changing thing, and as voice UIs evolve, users will come to expect more advanced and human-like conversations. Judging from fallback intent utterances in my company’s production skill, and the way my kids yell at Alexa, I think they already do 🙂. This evolution is the focus of a number of new research efforts, including the Alexa Prize sponsored by Amazon.

As I am wont to do, I started pondering the intersection of voice user experience, data, and reasoning and how we might leverage graph technologies and knowledge graphs to power more complex, multi-turn user interactions that chat more like a human. After a couple of conversations with colleagues, I felt there was enough to go on to present the basic premise behind my thinking at a local data and analytics conference. My hypothesis:

Traversing the relationships between entities on a knowledge graph will create richer turn-by-turn machine-user interactions that enable more human-like conversations.

Building Blocks

To really put theory into practice, one needs a number of things to get started. I created and continue to refine a number of pieces to the puzzle. This includes:

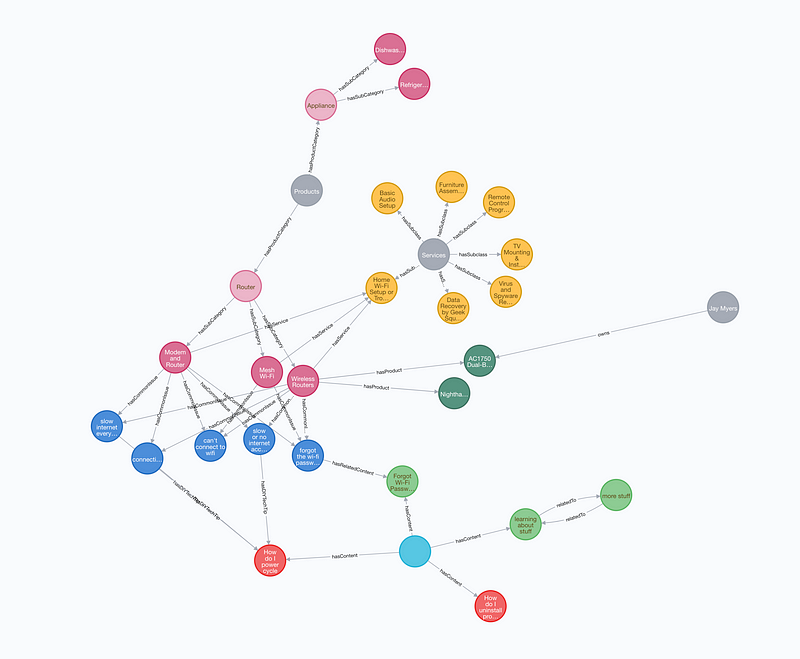

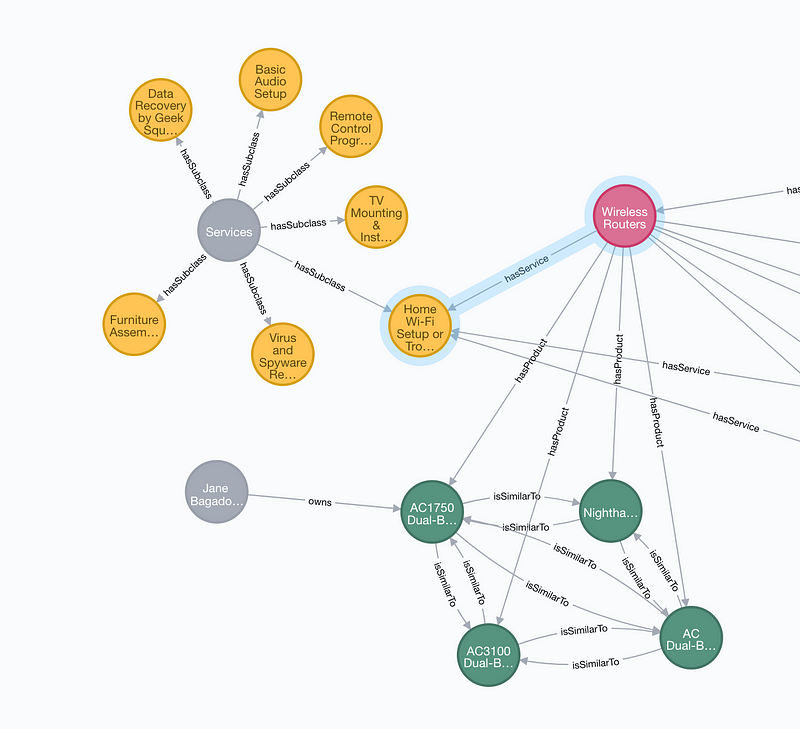

A Knowledge Graph. At a high level, knowledge graphs are interlinked sets of facts that describe real-world entities and their interrelations. For the purposes of experimentation, I modeled facts into a small knowledge graph from various domains that would be applicable to my end user goal, including customer, content (informational articles, DIY content, FAQs), products, and tech support services topics. At the heart of knowledge graphs are relationships between entities/concepts. For example, my data contains connections like ones between a customer entity and the product they own (customer owns product), or a product entity and a common customer problem with it (product has common issue). When we follow these relationships between data entities and creatively string them together with user-friendly VUI, we can create better, more human-like turn-by-turn conversations. I’ll illustrate this concept in a section below.

Visualization of my small Knowledge Graph
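To make the "customer owns product" and "product has common issue" idea concrete, here is a minimal sketch in plain Python. It is not how the graph database stores anything; the node names, the triple layout, and the `build_index` helper are all assumptions made up for illustration.

```python
from collections import defaultdict

# Hypothetical facts, stored as (subject, relationship, object) triples.
TRIPLES = [
    ("Jane", "owns", "Netgear AC1750"),
    ("Wireless Routers", "hasProduct", "Netgear AC1750"),
    ("Wireless Routers", "hasCommonIssue", "slow internet everywhere"),
]


def build_index(triples):
    """Index outgoing edges by subject so a node's relationships are one lookup away."""
    index = defaultdict(list)
    for subject, relationship, obj in triples:
        index[subject].append((relationship, obj))
    return index


index = build_index(TRIPLES)
for relationship, target in index["Wireless Routers"]:
    print(f"Wireless Routers -[{relationship}]-> {target}")
```

Following a relationship is then just a lookup on the node you are standing on, which is the property the rest of this experiment leans on.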

Knowledge graphs rely on graph databases. There are a growing number of them available; what’s appropriate for your specific application and team will vary. I’ve been a long-time supporter of RDF-based solutions (see: Stardog, Neptune, Anzo, Cayley and others) and have prototyped with a number of vendors of this type. For this experiment, I’m giving Neo4j, a labeled property graph (LPG), a whirl.

An intent. What sort of action is the user trying to take, and how would they invoke it to begin their journey? I’m focused on delivering more complex user scenarios that work best through multi-turn interactions, not one-and-done responses. I’ve seen a handful of unhandled requests for “technical support” in my production skill, so I’m focusing an initial effort on solving this customer problem. While tech support is pretty broad, it can serve as a really great example of a complex user experience focused on helping a customer troubleshoot and fix a common tech support issue by exploring tech tip topics and other connected concepts — a great match for a knowledge graph.

I’ve noticed a number of patterns have emerged in tech support related utterances. They are generally made up of two parts. First, they can be characterized by their carrier phrases: “help me fix”, “how to set up”, etc. Second, they contain the topic/object attached to the carrier phrase. We use the carrier phrase to match the intent of the user, and the value of the tech_topic slot as an initial search term and entry point into the knowledge graph. This experiment relies on the Alexa Skills Kit (ASK) to perform Automatic Speech Recognition (ASR) and match the user’s intent through its built-in Natural Language Processing and Understanding (NLP/NLU) capabilities.

The format of expected utterances: tech support carrier phrases and the object/ keyword or phrase of the utterance
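The two-part shape of these utterances can be sketched locally. In the actual skill, ASK does the matching from sample utterances in the interaction model (something like "help me fix {tech_topic}"); the snippet below is only a stand-in to show the split, and the phrase list and the `split_utterance` helper are assumptions.

```python
# Hypothetical carrier phrases; in a real skill these would live in the
# interaction model as sample utterances such as "help me fix {tech_topic}".
CARRIER_PHRASES = ["help me fix", "help me fix my", "how to set up", "how do i set up"]


def split_utterance(utterance):
    """Return (carrier_phrase, tech_topic), or (None, text) when nothing matches."""
    text = utterance.lower().strip()
    # Try longer phrases first so "help me fix my" wins over "help me fix".
    for phrase in sorted(CARRIER_PHRASES, key=len, reverse=True):
        prefix = phrase + " "
        if text.startswith(prefix):
            return phrase, text[len(prefix):]
    return None, text


carrier, tech_topic = split_utterance("Help me fix my wireless router")
print(carrier, "|", tech_topic)
```

The carrier phrase selects the intent, and whatever is left over becomes the search term that gets handed to the knowledge graph.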

Basic Alexa Skill. I won’t drill too deep into this part. I’m hosting a basic skill application as an AWS Lambda function. The goal is to build a more complex conversation with limited application logic. We should rely on the relationships in the data to drive where the conversation goes, not on branching logic in the skill code. That approach is akin to hard-coding: it forces the voice skill developer to cover many different use cases and usually results in a brittle conversational experience.
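For readers who haven't built one, a bare-bones Lambda entry point might look something like the sketch below. It reads the slot straight out of the Alexa request JSON and returns a plain-text response envelope. The intent name `TechSupportIntent` and the spoken strings are assumptions, and a production skill would more likely use the ASK SDK than raw dictionaries.

```python
def build_response(speech, end_session=False):
    """Wrap plain text in the Alexa custom-skill response envelope."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": speech},
            "shouldEndSession": end_session,
        },
    }


def lambda_handler(event, context):
    """Entry point: route a tech support intent, fall through otherwise."""
    request = event["request"]
    if request["type"] == "IntentRequest":
        intent = request["intent"]
        if intent["name"] == "TechSupportIntent":  # hypothetical intent name
            slots = intent.get("slots") or {}
            topic = (slots.get("tech_topic") or {}).get("value")
            if topic:
                # A real skill would hand `topic` to the knowledge graph here.
                return build_response(f"Let me look into {topic}.")
            return build_response("What do you need help with?")
    return build_response("Sorry, I can't help with that yet.", end_session=True)
```

Note how little is decided in the handler itself: it extracts the topic and steps aside, leaving the direction of the conversation to whatever the graph returns.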

Putting the Pieces Together

For the purposes of demonstration, I’ve created a hypothetical customer persona, Jane Bagadonuts. She’s having trouble with a spotty wireless router. She starts a conversation with Alexa.

Jane: “Alexa, ask Jay’s skill to help me fix my wireless router.”

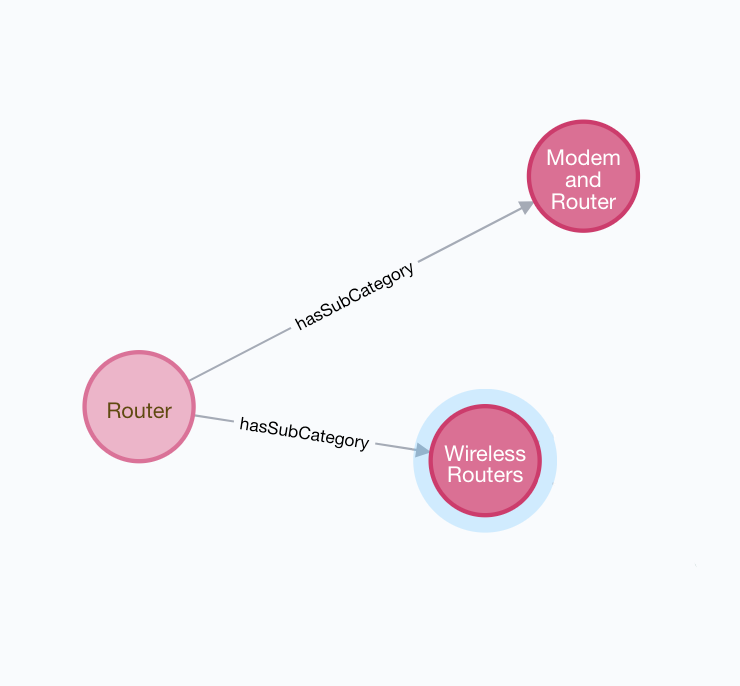

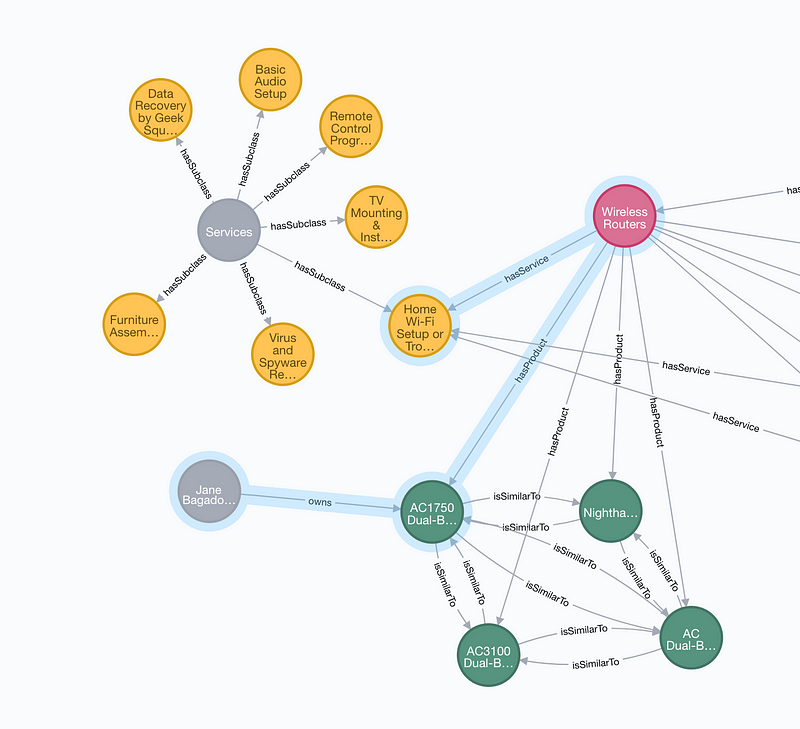

My application springs to life when my tech support intent is matched, based on the carrier phrase “help me fix”. The value of the tech_topic slot is passed and my skill performs an initial full text query on “wireless router”, which matches a wireless router product subcategory entity, as seen in the graph visualization below.

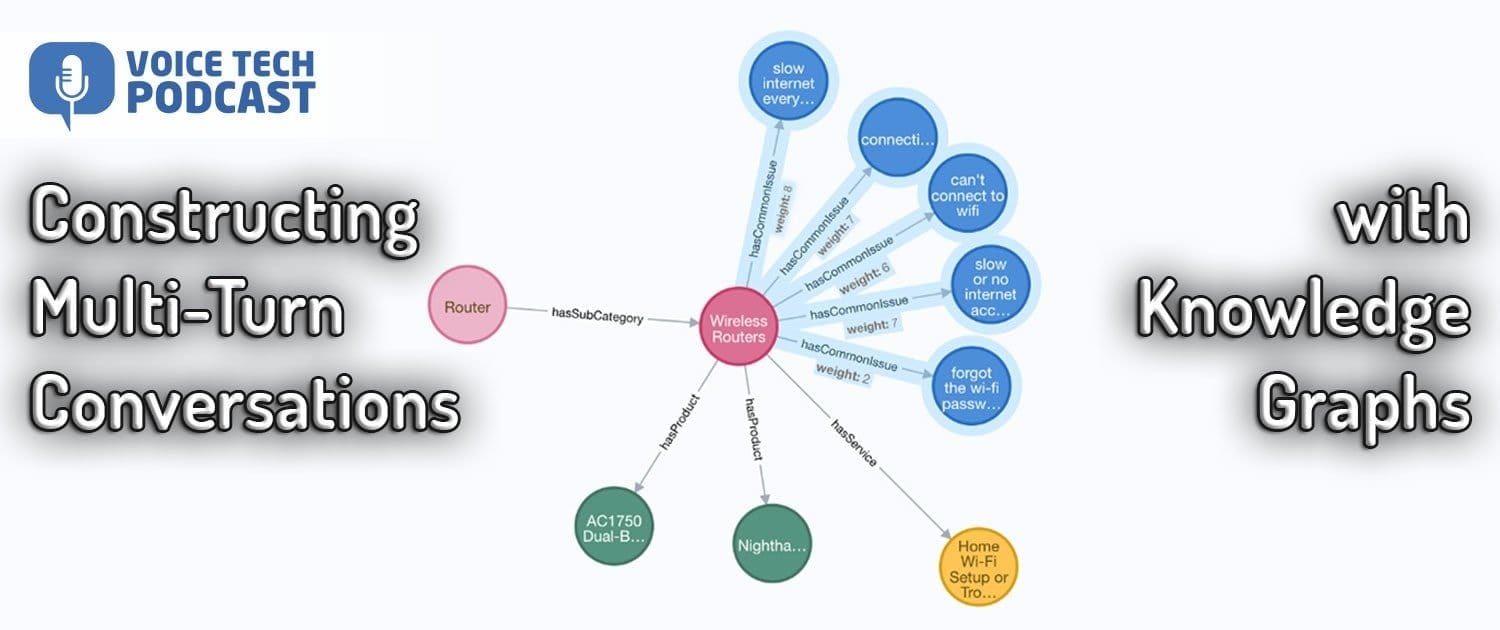

Full text query for “wireless router” produces a wireless router product subcategory in my KG
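As a rough picture of what that entry-point lookup does, here is a toy full-text match in plain Python. It is a stand-in for the database's own full-text index, not a reproduction of it; the node names, labels, crude plural stripping, and overlap scoring are all assumptions.

```python
import re

# Hypothetical node names mapped to their labels.
NODES = {
    "Wireless Routers": "ProductSubcategory",
    "Wireless Printers": "ProductSubcategory",
    "Netgear AC1750 Dual-Band Wireless Router": "Product",
}


def tokens(text):
    """Lowercase, split into words, and crudely strip a trailing plural 's'."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w[:-1] if len(w) > 3 and w.endswith("s") else w for w in words}


def search(query, nodes=NODES):
    """Rank nodes by token overlap (Jaccard similarity) with the query."""
    q = tokens(query)
    scored = []
    for name, label in nodes.items():
        n = tokens(name)
        score = len(q & n) / len(q | n)
        if score > 0:
            scored.append((score, name, label))
    return sorted(scored, reverse=True)


best = search("wireless router")[0]
print(best)
```

The top-ranked node becomes the starting point for every traversal that follows.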

My skill now has a starting point into my knowledge graph, but that’s just the beginning of the conversation. Since Jane invoked the skill through the tech support intent, I can infer the type of relationships we might initially want to explore with Jane to solve her problem.

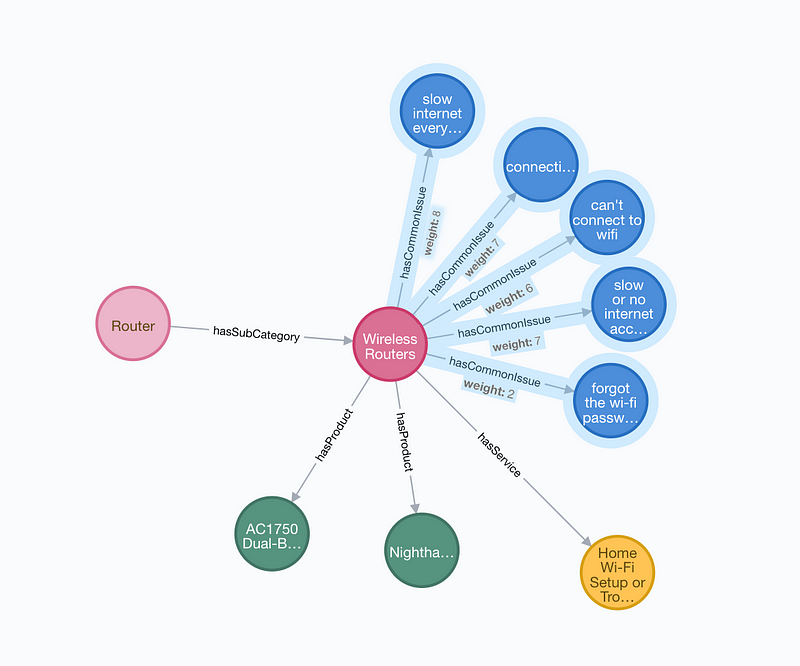

Another graph query exposes a number of relationships connected to the wireless router node, including wireless router products, service plans, and more. The most beneficial to Jane would be the hasCommonIssue relationship, and my query exposes some of the most common issue nodes connected to wireless routers. The data has a weight attribute on the edge, so the skill takes the two highest-weighted common problems and serves them up to the user in the VUI to elicit a response and continue the conversation.

Exposing hasCommonIssue relationships
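Picking the two heaviest hasCommonIssue edges and turning them into a prompt could be sketched like this. The weights, the third issue, and both helper functions are assumptions invented for the example.

```python
# Hypothetical weighted edges out of the "Wireless Routers" node.
EDGES = [
    {"source": "Wireless Routers", "type": "hasCommonIssue",
     "target": "a forgotten admin password", "weight": 0.4},
    {"source": "Wireless Routers", "type": "hasCommonIssue",
     "target": "connections dropping at random times", "weight": 0.8},
    {"source": "Wireless Routers", "type": "hasCommonIssue",
     "target": "slow internet everywhere", "weight": 0.9},
]


def top_issues(edges, node, limit=2):
    """Return the targets of the highest-weighted hasCommonIssue edges."""
    matches = [e for e in edges
               if e["source"] == node and e["type"] == "hasCommonIssue"]
    matches.sort(key=lambda e: e["weight"], reverse=True)
    return [e["target"] for e in matches[:limit]]


def issue_prompt(category, issues):
    """Phrase the issues as a yes/no question for the VUI."""
    listed = ", or ".join(issues)
    return (f"{category} are known for problems like {listed}. "
            "Do these describe your issue?")


issues = top_issues(EDGES, "Wireless Routers")
prompt = issue_prompt("wireless routers", issues)
print(prompt)
```

Because the ranking lives on the edges, re-weighting the data changes what Alexa says without touching the skill code.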

Alexa: “I’m sorry to hear you’re having problems…wireless routers are known for problems like slow internet everywhere, or connections dropping at random times. Do these describe your issue?”

Jane: “Yes”

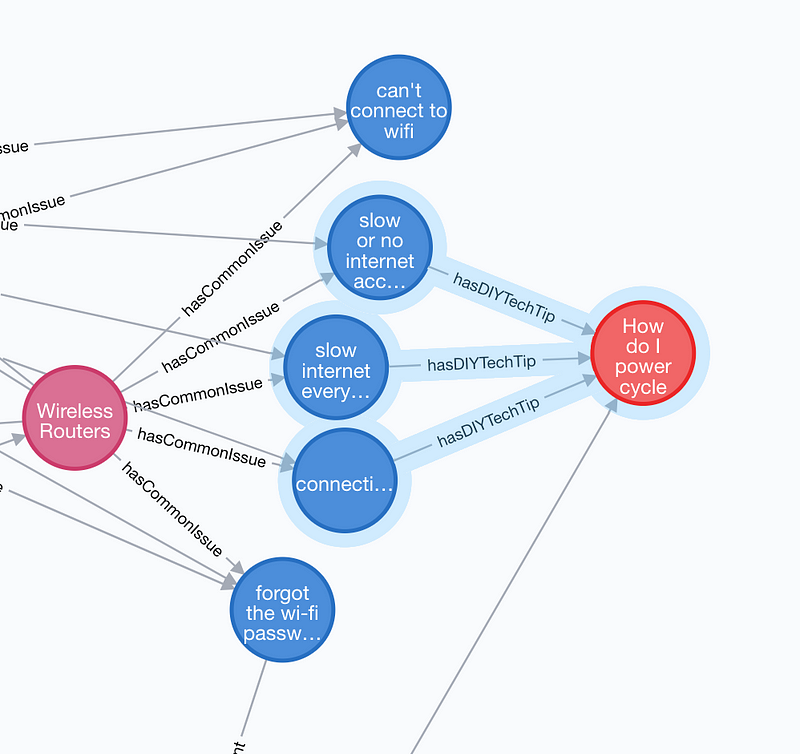

Excellent. I have an affirmative response from Jane, which we take as permission to move forward. To further the conversation, my application queries for and explores relationships connected to common wireless router issues. Interestingly enough, the data in my KG points to a shared relationship among the common issue nodes: both connect to a step-by-step DIY tech tip that I could present to Jane to help her solve her problem.

Uncovering DIY content through KG relationships
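Finding content that several issue nodes have in common boils down to a set intersection. A small sketch, assuming a hypothetical `hasTechTip` relationship and made-up tip titles:

```python
# Hypothetical edges from common-issue nodes to DIY tech tips.
EDGES = [
    ("slow internet everywhere", "hasTechTip", "Power cycle your modem and router"),
    ("slow internet everywhere", "hasTechTip", "Move the router to a central spot"),
    ("connections dropping at random times", "hasTechTip", "Power cycle your modem and router"),
    ("connections dropping at random times", "hasTechTip", "Update the router firmware"),
]


def shared_targets(edges, sources, rel_type):
    """Return the targets that every source node reaches via rel_type."""
    target_sets = [
        {t for s, r, t in edges if s == source and r == rel_type}
        for source in sources
    ]
    return set.intersection(*target_sets) if target_sets else set()


confirmed_issues = ["slow internet everywhere", "connections dropping at random times"]
shared_tips = shared_targets(EDGES, confirmed_issues, "hasTechTip")
print(shared_tips)
```

A tip that addresses every confirmed issue is a natural first thing to offer.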

Alexa: “OK, I can lead you through step by step instructions on how to power cycle your modem and router. Do you want to give that a shot?”

Jane: “Not right now”

Jane doesn’t want to bother with the tech tip, but like a real human assistant worth their salt, we’re not going to give up so easily without finding a solution, or Jane telling us to get lost. Let’s back up and scan what other relationships I have connected to the wireless routers node that I can leverage to present options to her. I do this by querying all the relationships connected to wireless routers that aren’t the hasCommonIssue type.

The Wireless Router node and its connection to a service offering through the hasService relationship
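The "everything except hasCommonIssue" step is a simple filter over the node's outgoing edges. A sketch under assumed data, with an invented `other_relationships` helper:

```python
from collections import defaultdict

# Hypothetical outgoing edges from the "Wireless Routers" node.
EDGES = [
    ("Wireless Routers", "hasCommonIssue", "slow internet everywhere"),
    ("Wireless Routers", "hasService", "In-home repair and setup ($99)"),
    ("Wireless Routers", "hasProduct", "Netgear AC1750"),
    ("Wireless Routers", "hasProduct", "Netgear Nighthawk AC1900"),
]


def other_relationships(edges, node, excluded_type):
    """Group a node's outgoing edges by type, skipping one relationship type."""
    grouped = defaultdict(list)
    for source, rel_type, target in edges:
        if source == node and rel_type != excluded_type:
            grouped[rel_type].append(target)
    return dict(grouped)


options = other_relationships(EDGES, "Wireless Routers", "hasCommonIssue")
print(options)
```

Each remaining relationship type is a candidate direction for the next turn of the conversation.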

This yields some pretty interesting results. First, it looks like the wireless routers node has a hasService relationship to a $99 service plan that I can offer Jane to repair the product and set it up in her home.

Exposing a link between the customer and a product in the KG

The wireless routers node also contains a number of hasProduct relationships to product nodes. Since Jane is authenticated to my skill, we can perform some additional querying to see if she might own one of the routers I sell. Voila: there’s a link between the Jane customer entity and a product entity, an “owns” relationship with a Netgear AC1750 Dual-Band wireless router. We should validate that this is the product she’s referring to in the conversation.

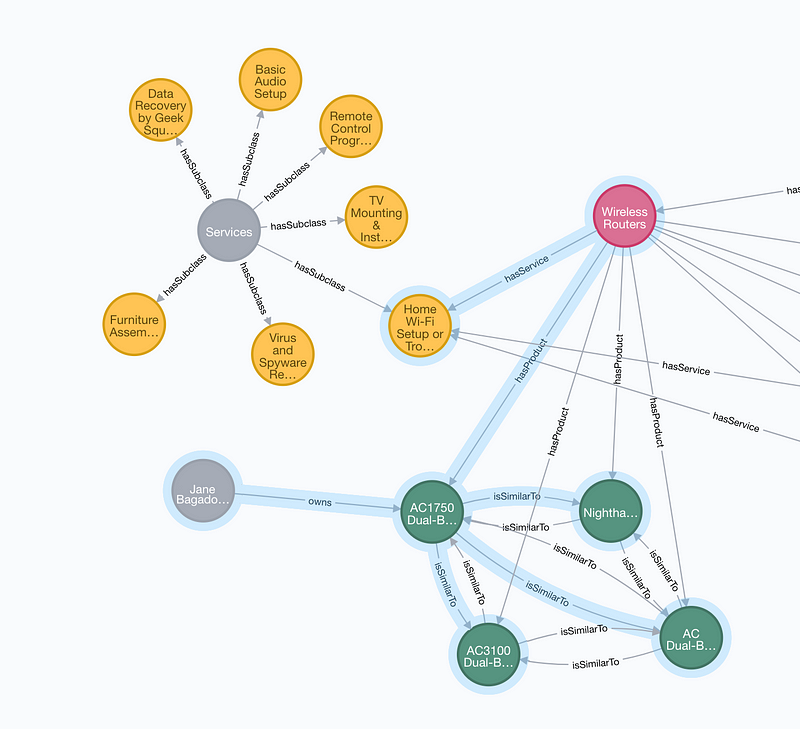

Querying further relationships between products in the graph to find similar products to offer to Jane

Once I know Jane owns the Netgear AC1750 and is having trouble with it, there may be an opportunity to offer a similar product as a replacement. A further query on this product node yields a number of isSimilarTo relationships that connect it to other similar wireless routers.
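The two hops described here (customer to owned product, then product to similar products) can be sketched as follows. The edge list, the extra "Acme Smart TV" purchase, and the helper names are assumptions for illustration only.

```python
# Hypothetical edges covering the category, the customer, and similar products.
EDGES = [
    ("Wireless Routers", "hasProduct", "Netgear AC1750"),
    ("Wireless Routers", "hasProduct", "Netgear Nighthawk AC1900"),
    ("Jane", "owns", "Netgear AC1750"),
    ("Jane", "owns", "Acme Smart TV"),
    ("Netgear AC1750", "isSimilarTo", "Netgear Nighthawk AC1900"),
]


def targets(edges, source, rel_type):
    """Follow one relationship type out of a node."""
    return [t for s, r, t in edges if s == source and r == rel_type]


def owned_in_category(edges, customer, category):
    """Products the customer owns that also belong to the given category."""
    in_category = set(targets(edges, category, "hasProduct"))
    return [p for p in targets(edges, customer, "owns") if p in in_category]


owned = owned_in_category(EDGES, "Jane", "Wireless Routers")
alternatives = targets(EDGES, owned[0], "isSimilarTo") if owned else []
print(owned, alternatives)
```

If the customer owns nothing in the category, `alternatives` stays empty and the skill would need another option to offer, such as the service plan.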

Alexa: “Looks like you purchased a Netgear AC1750 from us. Is this the product you’re having trouble with?”

Jane: “Yep”

Alexa: “I can have someone come to your house and fix it for you for $99, or you can try a new Netgear Nighthawk AC1900 Dual-Band Wi-Fi 5G Router that I have on sale right now for $129.99. What do you think?”

Jane: “I’ll try a new router”

Alexa: “Great! That will be $133.41 with tax and will ship to your primary shipping address on file.”

Jane ends the interaction and awaits her new wireless router. Success!

Conclusion and Thoughts

Ms. Bagadonuts’s scenario could have played out in similar ways in real life. Imagine her initiating a human-to-human tech support chat, or physically going into a store seeking assistance. A help desk associate would help troubleshoot the issue and solve the problem, or suggest an alternative solution. The associate may switch modes from support to selling, which ultimately benefits both parties. Such situations happen every day — in this hypothetical, we’re simply replacing the human element with AI built to traverse a knowledge graph, mimicking the thought processes a person might go through to reach a satisfactory solution to a customer problem.

While my little skill’s a good start, there’s plenty of room for expansion and improvement.

The knowledge graph should be much bigger. Mature KGs will contain billions, even trillions of nodes and edges that represent the full breadth and depth of an individual or organization’s knowledge. More entities and relationships will equate to richer virtual assistants that are more interactive and can solve complex, multi-turn customer problems.

Better VUI. I’ll admit, I’m not an elite voice user interface mind, although I aspire to be better! In my opinion, great voice experiences are a marriage between surfacing (technical) and conveying (UX/CX) knowledge. My skill would benefit from some love from a skilled VUI designer.

Handling more complex responses from Jane. I’m guilty of building a skill that can only handle rudimentary yes/no type responses. What if Jane responds with something more complex or unexpected? Having solid fallback scenarios is a core principle of voice. Can we determine if Jane is completely switching topics/contexts and perform another broad full text query of my KG to take the conversation in a different direction, or is she talking about something in the current context that I don’t have an answer for?

With the learnings from this first trial run, I’m excited to pursue this idea further. That includes, but is not limited to, creating additional experiments that address other customer scenarios, working the idea into the voice product I’m currently building as part of my main “hustle”, or loaning the idea to academics interested in pursuing competitions like the Alexa Prize (any takers?), all in the interest of shaping the future of the conversational voice landscape. Onward!